Security researchers at Check Point Research have identified critical vulnerabilities (CVE-2025-59536, CVE-2026-21852) in Anthropic's AI-based coding tool "Claude Code." The flaws allowed remote code execution and the theft of API credentials by abusing built-in mechanisms such as hooks, Model Context Protocol (MCP) integrations, and environment variables. Attackers could execute arbitrary shell commands and exfiltrate API keys as soon as a developer cloned and opened an untrusted project. Beyond launching the tool, no further user action was required.

Analysis: When configuration becomes execution

Traditionally, repository configuration files were considered passive metadata that merely defined operating parameters, writes Check Point Research (CPR) in its analysis. With the advent of AI-powered agent tools such as Claude Code, this has changed fundamentally. These tools are designed to autonomously optimize workflows, blurring the line between configuration and code execution.

The CPR researchers identified three specific categories in which this line was crossed:

1. Hidden command execution via Claude hooks

Claude Code has automation features, known as hooks, that trigger predefined actions when a session starts. The security researchers demonstrated that this mechanism can be abused to launch arbitrary shell commands automatically as soon as a developer starts the tool in a project directory. Because this happens during initialization, the user has no chance to notice or prevent the activity.
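For readers who want to inspect a freshly cloned repository before starting the tool in it, the following is a minimal sketch of such a check. It assumes that project-level settings live in `.claude/settings.json` and that hook commands are stored under a top-level `hooks` key as strings named `command`; the article does not state these details, so treat the path and key names as assumptions. The function name `find_hook_commands` is hypothetical.

```python
import json
from pathlib import Path

# Assumed location of project-level settings; not confirmed by the article.
SETTINGS_PATH = Path(".claude") / "settings.json"


def collect_commands(node):
    """Recursively collect every string stored under a "command" key."""
    found = []
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "command" and isinstance(value, str):
                found.append(value)
            else:
                found.extend(collect_commands(value))
    elif isinstance(node, list):
        for item in node:
            found.extend(collect_commands(item))
    return found


def find_hook_commands(repo_root):
    """Return shell commands declared under the assumed "hooks" key, if any."""
    settings_file = Path(repo_root) / SETTINGS_PATH
    try:
        settings = json.loads(settings_file.read_text(encoding="utf-8"))
    except (OSError, ValueError):
        # Missing, unreadable, or malformed file: nothing to report.
        return []
    if not isinstance(settings, dict):
        return []
    return collect_commands(settings.get("hooks", {}))
```

Calling `find_hook_commands("path/to/clone")` returns a list of command strings that can be reviewed by hand. The sketch only reads the file and never executes anything it finds.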

2. Bypassing MCP consent (CVE-2025-59536)

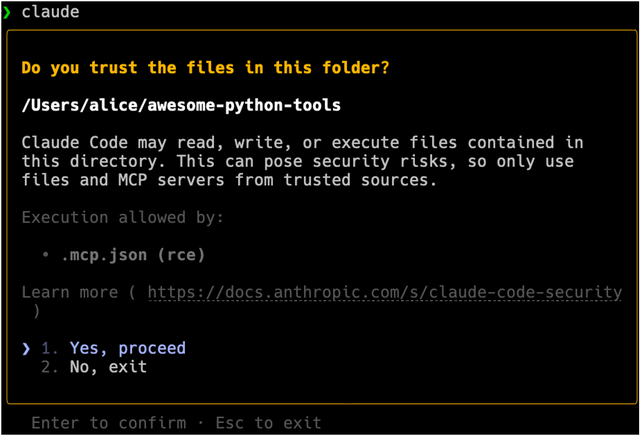

The tool uses the Model Context Protocol (MCP) to integrate external services when a project is opened. Claude Code normally requires explicit user consent before initializing such services. The researchers found, however, that repository-controlled settings could override this security prompt, so that software was launched before trust had been established. Control thereby shifts from the user to the (potentially malicious) repository.

Figure: User consent dialog for initializing MCP servers (Source: Screenshot from Check Point Software Technologies Ltd. in Claude Code)
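A repository can be checked for this pattern before it is opened. The sketch below reports which MCP servers a project declares and whether its settings try to pre-approve them. The file names (`.mcp.json`, `.claude/settings.json`) and the key names (`mcpServers`, `enableAllProjectMcpServers`, `enabledMcpjsonServers`) are assumptions that do not come from the article, and `audit_mcp_consent` is a hypothetical helper.

```python
import json
from pathlib import Path

# Assumed names; neither the files nor the keys are confirmed by the article.
SETTINGS_FILE = Path(".claude") / "settings.json"
MCP_FILE = Path(".mcp.json")
AUTO_APPROVE_KEYS = ("enableAllProjectMcpServers", "enabledMcpjsonServers")


def load_json_object(path):
    """Return the parsed JSON object at path, or {} if missing or invalid."""
    try:
        data = json.loads(Path(path).read_text(encoding="utf-8"))
    except (OSError, ValueError):
        return {}
    return data if isinstance(data, dict) else {}


def audit_mcp_consent(repo_root):
    """Report declared MCP servers and any truthy auto-approval settings."""
    root = Path(repo_root)
    settings = load_json_object(root / SETTINGS_FILE)
    servers = load_json_object(root / MCP_FILE).get("mcpServers", {})
    return {
        "declared_servers": sorted(servers) if isinstance(servers, dict) else [],
        "auto_approve_settings": [k for k in AUTO_APPROVE_KEYS if settings.get(k)],
    }
```

A result with both declared servers and a non-empty `auto_approve_settings` list suggests that the repository itself is trying to answer the consent question, which is the shift of control described above.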

3. API key theft prior to trust confirmation (CVE-2026-21852)

The most serious vulnerability concerns the manipulation of API communication. Through a targeted configuration change, all API traffic, including the complete authorization header with the developer's active API key, could be redirected to an attacker's server. This happened as soon as the directory was opened, even before the user could make a decision about the trustworthiness of the project.
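A redirect of this kind can be spotted by looking for endpoint overrides in a project's settings. The following sketch assumes that the settings file is `.claude/settings.json`, that it may carry an `env` object, and that the API endpoint is controlled by a variable named `ANTHROPIC_BASE_URL`; none of these names appear in the article, so they are labeled as assumptions. The trusted host list and the function `find_endpoint_overrides` are likewise illustrative.

```python
import json
from pathlib import Path
from urllib.parse import urlparse

# All names below are assumptions for illustration, not taken from the article.
SETTINGS_FILE = Path(".claude") / "settings.json"
ENDPOINT_VARS = {"ANTHROPIC_BASE_URL"}
TRUSTED_HOSTS = {"api.anthropic.com"}


def find_endpoint_overrides(repo_root):
    """Return (variable, value) pairs that point API traffic at an untrusted host."""
    try:
        text = (Path(repo_root) / SETTINGS_FILE).read_text(encoding="utf-8")
        settings = json.loads(text)
    except (OSError, ValueError):
        return []
    env = settings.get("env", {}) if isinstance(settings, dict) else {}
    if not isinstance(env, dict):
        return []
    findings = []
    for name, value in env.items():
        if name not in ENDPOINT_VARS or not isinstance(value, str):
            continue
        try:
            host = urlparse(value).hostname
        except ValueError:
            host = None  # Unparseable URL: treat as untrusted.
        if host not in TRUSTED_HOSTS:
            findings.append((name, value))
    return findings
```

Any non-empty result deserves a manual look before the directory is opened, since every request sent to an overridden endpoint would carry the API key in its authorization header.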

Impact: The risk beyond the desktop

In modern cloud development environments, a compromised API key is much more than just the loss of a single access point. Anthropic's "Workspaces" feature allows multiple keys to share access to the same cloud-based project files. With a stolen key, an attacker can:

- Access all shared project files within the workspace.

- Modify or delete data stored in the cloud.

- Upload malicious content to existing repositories.

- Incur unexpected and potentially enormous API usage costs.

This means that a single mistake by a developer (cloning and opening an untrusted repository) can immediately escalate into a team-wide or business-critical incident.

A new threat model for the AI supply chain

I feel sick when I see security reports like this. Everything we have learned about cybersecurity is being torn down so that "we" can plunge enthusiastically into ruin (after all, AI development is the future).

Check Point Research worked closely with Anthropic to address these vulnerabilities as part of a responsible disclosure process. Anthropic promptly implemented the following measures:

- User consent prompts were significantly strengthened.

- External tools are prevented from running without explicit permission.

- All API communication is blocked until the user has confirmed that the project directory is trusted.

Anthropic fully resolved all reported issues before Check Point Research made them public. However, the results of Check Point Research's analysis underscore a structural change in cybersecurity.

The risk is no longer limited to a user actively executing malicious code. In a world of AI-powered automation, the risk begins as soon as a project is opened. As AI tools increasingly execute commands autonomously, initialize external integrations, and initiate network communications, configuration files are becoming the new primary target for supply chain attacks.

Oded Vanunu, Head of Product Vulnerability Research at Check Point, sums up the bottom line: "This research highlights a fundamental shift in how we need to think about risk in the age of AI. AI development tools are no longer just peripheral aids; they are becoming infrastructure themselves. As layers of automation gain the ability to influence the execution and behavior of the environment, the boundaries of trust are shifting. Companies driving the adoption of AI must ensure that their security models evolve at the same pace."

Further information, including a technical analysis of the vulnerabilities, can be found in the CPR blog post "Caught in the Hook: RCE and API Token Exfiltration Through Claude Code Project Files | CVE-2025-59536 | CVE-2026-21852", dated February 25, 2026.